The GM204 Architecture

NVIDIA’s new GM204 GPUs are finally revealed.

James Clerk Maxwell's equations are the foundation of our society's knowledge of optics and electrical circuits. It is a fitting tribute for NVIDIA to use Maxwell as the code name for a GPU architecture, and NVIDIA hopes that the features, performance, and efficiency built into the GM204 GPU are something Maxwell himself would be impressed by. Without giving away the surprise conclusion here in the lead, I can tell you that I have never before seen a GPU perform as well as the one we tested this week, let alone while changing the power efficiency discussion so dramatically.

To be fair, though, this isn't our first experience with the Maxwell architecture. With the release of the GeForce GTX 750 Ti and its GM107 GPU, NVIDIA put the industry on notice and left us all wondering whether they could bring such a design to the high-end, enthusiast-class market. The GTX 750 Ti brought a significantly lower power design to a market that desperately needed it, and we were even able to showcase that by upgrading some off-the-shelf PCs without the need for any external power connector.

That was GM107, though; today's release is GM204, indicating that not only are we seeing the larger cousin of the GTX 750 Ti but also at least some moderate architecture and feature changes from the first run of Maxwell. The GeForce GTX 980 and GTX 970 are going to take on the best products from the GeForce lineup as well as the AMD Radeon family of cards, with aggressive pricing and performance levels to match. And for those of you who understand the technology at a fundamental level, you will likely be surprised by how little power it requires to achieve these goals. Toss in support for things like a new AA method, Dynamic Super Resolution, and even improved SLI performance, and you can see why doing it all on the same process technology is impressive.

The NVIDIA Maxwell GM204 Architecture

The NVIDIA Maxwell GM204 graphics processor was built from the ground up with an emphasis on power efficiency. As was stated many times during the technical sessions we attended last week, the architecture team learned quite a bit while developing the Kepler-based Tegra K1 SoC, and much of that filtered its way into the larger, much more powerful product you see today. This product is fast and efficient, and it got there on the same TSMC 28nm process technology used for the Kepler-based GTX 680 and even AMD's Radeon R9 series of products.

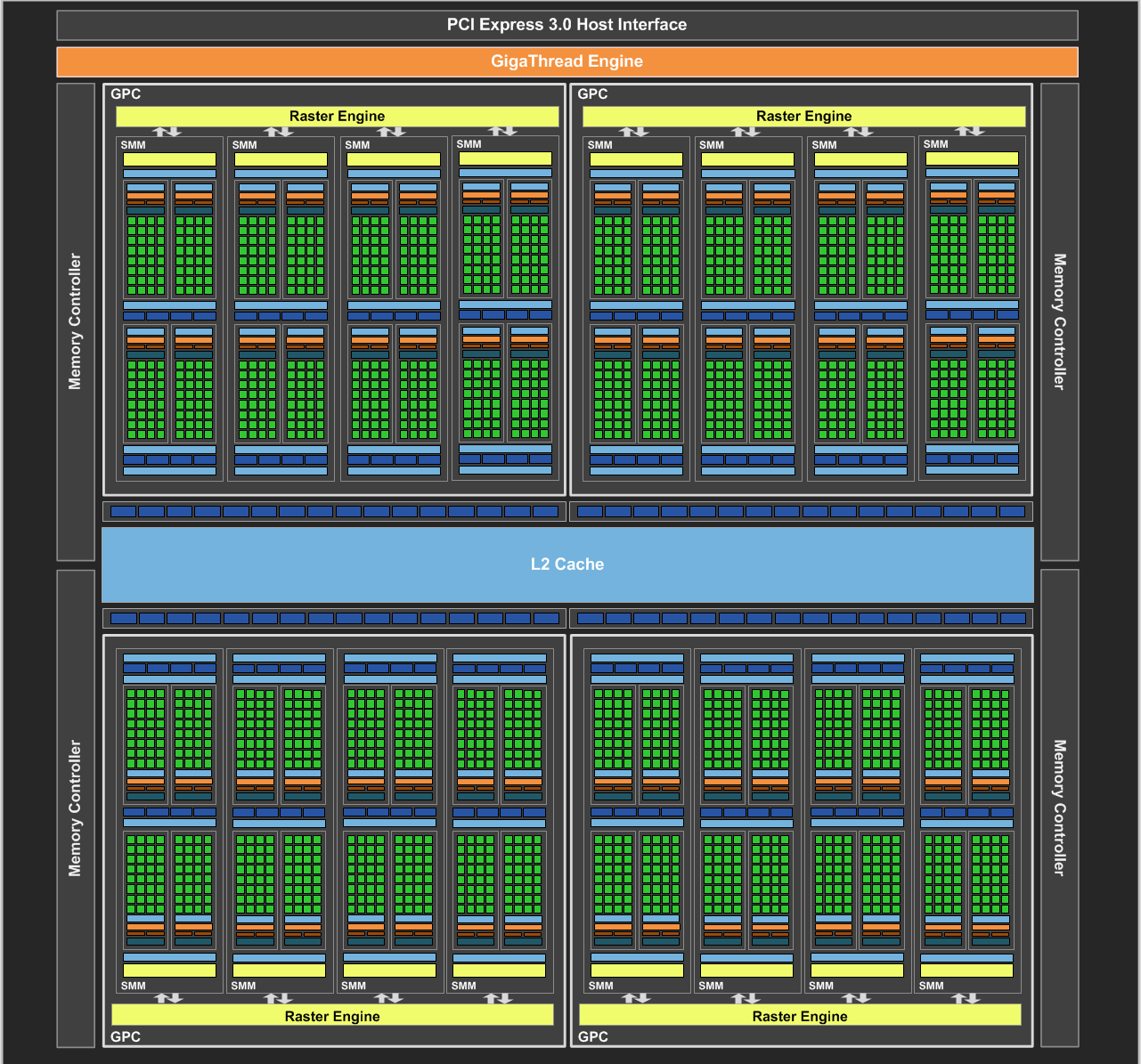

The fundamental structure of GM204 is set up like that of the GM107 product shipped as the GTX 750 Ti. There is an array of GPCs (Graphics Processing Clusters), each composed of multiple SMs (Streaming Multiprocessors, also called SMMs for this Maxwell derivative), plus external memory controllers. The GM204 chip (the full implementation of which is found on the GTX 980) consists of 4 GPCs, 16 SMMs, and four 64-bit memory controllers.

Each SMM features 128 CUDA cores, or stream processors, bringing the total for this product to 2048. That is a significant drop from the 2880 CUDA cores found in the GTX 780 Ti (full GK110 chip), but as you'll soon find out, thanks to higher clock speeds and architectural efficiency changes, the GTX 980 matches or beats the GTX 780 Ti in every test we have run.

Each SMM also features an improved PolyMorph Engine for geometry processing and 8 texture units. Altogether, the chip has 128 texture units, a 256-bit memory bus, 64 ROPs (raster operators), and 2MB of L2 cache.
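As a quick check on how the per-unit counts roll up into those chip totals, here is a minimal Python sketch. The GPC, SMM, core, texture-unit, and memory-controller counts come from the text; the per-controller ROP and L2 figures (16 ROPs and 512 KB per 64-bit controller) are our assumption, chosen because they reproduce the quoted 64 ROPs and 2MB.

```python
# GM204 building-block counts for the full chip (GTX 980), as quoted in the article.
GPCS = 4
SMMS = 16
CORES_PER_SMM = 128
TEXTURE_UNITS_PER_SMM = 8
MEMORY_CONTROLLERS = 4
BITS_PER_CONTROLLER = 64

# Assumption (not stated in the article): each 64-bit controller carries 16 ROPs and 512 KB of L2.
ROPS_PER_CONTROLLER = 16
L2_KB_PER_CONTROLLER = 512


def gm204_totals() -> dict:
    """Roll the per-unit counts up into chip-level totals."""
    return {
        "smms_per_gpc": SMMS // GPCS,
        "cuda_cores": SMMS * CORES_PER_SMM,
        "texture_units": SMMS * TEXTURE_UNITS_PER_SMM,
        "memory_bus_bits": MEMORY_CONTROLLERS * BITS_PER_CONTROLLER,
        "rops": MEMORY_CONTROLLERS * ROPS_PER_CONTROLLER,
        "l2_kb": MEMORY_CONTROLLERS * L2_KB_PER_CONTROLLER,
    }


if __name__ == "__main__":
    for name, value in gm204_totals().items():
        print(f"{name}: {value}")
```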

The GeForce GTX 680, the GK104-based graphics card that launched in March 2012, provides some interesting comparisons. First, the obvious: the GTX 980 has 33% more processor cores (2048 vs. 1536), higher clock speeds, and thus a much higher peak compute rate. The texture unit count remains the same, but doubling the ROP count gives the Maxwell GPU better performance with anti-aliasing at high resolutions. Memory bandwidth increases by a modest amount, but NVIDIA has made other enhancements in GM204 to improve in that area as well.
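To put rough numbers on that comparison, the sketch below computes the unit-count ratios and a theoretical FP32 peak, counting one fused multiply-add (2 FLOPs) per CUDA core per clock. The unit counts are from this article; the base clocks (1006 MHz for the GTX 680, 1126 MHz for the GTX 980) are our assumption from public spec sheets, and boost clocks would raise both peaks.

```python
# Unit counts from the article; base clocks in MHz are an assumption from public spec sheets.
GTX_680 = {"cores": 1536, "texture_units": 128, "rops": 32, "base_mhz": 1006}
GTX_980 = {"cores": 2048, "texture_units": 128, "rops": 64, "base_mhz": 1126}


def peak_fp32_gflops(card: dict) -> float:
    """Theoretical peak: each core retires one FMA (2 FLOPs) per clock."""
    return 2 * card["cores"] * card["base_mhz"] / 1000.0


def ratio(key: str) -> float:
    """GTX 980 value divided by GTX 680 value for one spec."""
    return GTX_980[key] / GTX_680[key]


if __name__ == "__main__":
    for key in ("cores", "texture_units", "rops"):
        print(f"{key}: {ratio(key):.2f}x")
    gain = peak_fp32_gflops(GTX_980) / peak_fp32_gflops(GTX_680)
    print(f"peak FP32 at base clock: {gain:.2f}x")
```

This only captures peak arithmetic rate; real game performance also depends on memory, scheduling efficiency, and drivers.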

Look at those bottom four statistics, though: GM204 has 1.66 billion more transistors and a 35% larger die, yet NVIDIA quotes a TDP that is 30 watts lower than GK104's on the same 28nm process. If you take performance into consideration, though, the GTX 980 should really be compared against the GK110-based GTX 780 Ti, a GPU with a 250 watt TDP, a 7.1 billion transistor count, and a die size of 551 mm². As we dive into the benchmarks on the following pages, you will see why this is so interesting.
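The three deltas in that paragraph can be reproduced from the underlying spec values. Those values (3.54 billion transistors, 294 mm², and 195 W for GK104/GTX 680; 5.2 billion, 398 mm², and 165 W for GM204/GTX 980) are our assumption from public spec sheets rather than figures printed in this text. A minimal sketch:

```python
# Spec values are assumptions taken from public spec sheets, not from the article itself.
GK104 = {"transistors_billion": 3.54, "die_mm2": 294, "tdp_w": 195}  # GTX 680
GM204 = {"transistors_billion": 5.2, "die_mm2": 398, "tdp_w": 165}   # GTX 980


def deltas() -> dict:
    """GM204 relative to GK104: extra transistors, die growth, and TDP reduction."""
    return {
        "extra_transistors_billion": round(
            GM204["transistors_billion"] - GK104["transistors_billion"], 2
        ),
        "die_growth_pct": round(100 * (GM204["die_mm2"] / GK104["die_mm2"] - 1)),
        "tdp_reduction_w": GK104["tdp_w"] - GM204["tdp_w"],
    }


if __name__ == "__main__":
    for name, value in deltas().items():
        print(f"{name}: {value}")
```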

The SMMs of Maxwell are a fundamental change compared to Kepler. Rather than a single block of 192 shaders, the SMM is divided into four distinct blocks, each with a separate instruction buffer, scheduler, and 32 dedicated, non-shared CUDA cores. NVIDIA states that this simplifies the design and scheduling logic required for Maxwell, saving area and power. Pairs of these blocks are grouped together and share four texture filtering units and a texture cache. Shared memory is a separate pool that is shared among all four processing blocks of the SMM.
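One way to picture the SMM layout described above is as nested data structures. This is a hypothetical Python model for illustration only (the class names are ours, not NVIDIA's), but it makes the counts explicit: four partitions of 32 cores, each with its own scheduler, grouped in pairs that share four texture units, with one shared-memory pool for the whole SMM.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ProcessingBlock:
    """One SMM partition: its own instruction buffer and scheduler, 32 non-shared cores."""
    cuda_cores: int = 32
    schedulers: int = 1


@dataclass
class BlockPair:
    """Two processing blocks that share texture units and a texture cache."""
    blocks: List[ProcessingBlock] = field(
        default_factory=lambda: [ProcessingBlock(), ProcessingBlock()]
    )
    texture_units: int = 4


@dataclass
class SMM:
    """Hypothetical model of a Maxwell SMM: two block pairs plus one shared-memory pool."""
    pairs: List[BlockPair] = field(default_factory=lambda: [BlockPair(), BlockPair()])
    shared_memory_pools: int = 1  # shared among all four processing blocks

    @property
    def schedulers(self) -> int:
        return sum(b.schedulers for p in self.pairs for b in p.blocks)

    @property
    def cuda_cores(self) -> int:
        return sum(b.cuda_cores for p in self.pairs for b in p.blocks)

    @property
    def texture_units(self) -> int:
        return sum(p.texture_units for p in self.pairs)


if __name__ == "__main__":
    smm = SMM()
    print(smm.schedulers, smm.cuda_cores, smm.texture_units)
```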

With these changes, each SMM can offer 90% of the compute performance of a Kepler SM but in a smaller die area, allowing NVIDIA to integrate more of them per die. GK104 had 8 SMs (1536 CUDA cores), while GM204 has 16 SMs (2048 CUDA cores), giving it twice as many SMs.
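As a back-of-the-envelope reading of NVIDIA's 90% figure, and ignoring clock speed, memory, and everything else, twice as many SMs at 90% of the per-SM throughput works out to roughly 1.8x the aggregate SM throughput per clock. A minimal sketch of that arithmetic:

```python
KEPLER_SMS = 8           # GK104
MAXWELL_SMMS = 16        # GM204
SMM_RELATIVE_PERF = 0.9  # NVIDIA's claim: one SMM delivers ~90% of a Kepler SM


def cores_per_sm(total_cores: int, sm_count: int) -> int:
    return total_cores // sm_count


def relative_sm_throughput() -> float:
    """GM204 aggregate SM throughput per clock, relative to GK104 (rough estimate)."""
    return MAXWELL_SMMS * SMM_RELATIVE_PERF / KEPLER_SMS


if __name__ == "__main__":
    print(cores_per_sm(1536, KEPLER_SMS), cores_per_sm(2048, MAXWELL_SMMS))
    print(f"{relative_sm_throughput():.1f}x")
```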

NVIDIA's Jonah Alben indicated to us that the 192-core SMs used in Kepler seemed great at the time, but that they introduced quite a few inefficiencies due to their non-power-of-2 core count. It was more difficult for the scheduling hardware to keep the cores full and processing data, and the move to 128-core SMMs helps address this. Speaking of scheduling, there were efficiency changes there as well: instruction scheduling now happens much earlier in the pipeline, avoiding re-scheduling in many instances and helping to keep power down and performance high.
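The non-power-of-2 problem is easiest to see from the scheduler's point of view. Assuming four warp schedulers per SM in both designs and NVIDIA's 32-thread warp, the simplified model below asks how many warp instructions each scheduler must issue per clock to keep its share of the cores busy. It treats each scheduler as owning a fixed slice of the cores, which is only strictly true of Maxwell, so read it as an illustration rather than a description of Kepler's actual issue logic.

```python
WARP_SIZE = 32          # threads per warp on NVIDIA GPUs
SCHEDULERS_PER_SM = 4   # assumption: four warp schedulers in both Kepler's SM and Maxwell's SMM


def warps_per_scheduler_per_clock(cores_per_sm: int) -> float:
    """Warp instructions each scheduler must issue per clock to fill its slice of the cores."""
    cores_per_scheduler = cores_per_sm / SCHEDULERS_PER_SM
    return cores_per_scheduler / WARP_SIZE


if __name__ == "__main__":
    for name, cores in (("Kepler SM", 192), ("Maxwell SMM", 128)):
        rate = warps_per_scheduler_per_clock(cores)
        # A whole number means one warp instruction maps cleanly onto a fixed core slice.
        print(f"{name}: {cores // SCHEDULERS_PER_SM} cores/scheduler, {rate} warps/clock")
```

In this model, Kepler's 1.5 means each scheduler has to find a second independent instruction (dual issue) on half of all cycles just to keep every core busy, while Maxwell's 1.0 needs no such luck.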

Beyond the dramatic changes to the SMM, the 2MB L2 cache that NVIDIA has implemented on GM204 is another substantial change. Considering that GK104's L2 cache was 512KB, we are seeing a 4x increase in capacity, which should dramatically reduce the demand on GM204's memory controllers.
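A simple way to see why a bigger L2 matters: only requests that miss in the cache reach DRAM. The sketch below computes the capacity ratio from the two L2 sizes and applies a naive miss-traffic model. The hit rates used in the example are made-up illustrative values, not measurements of either chip.

```python
GK104_L2_KB = 512
GM204_L2_KB = 2048


def capacity_ratio(new_kb: int, old_kb: int) -> float:
    return new_kb / old_kb


def dram_requests(total_requests: int, l2_hit_rate: float) -> float:
    """Naive model: only requests that miss in L2 have to go out to DRAM."""
    if not 0.0 <= l2_hit_rate <= 1.0:
        raise ValueError("l2_hit_rate must be between 0 and 1")
    return total_requests * (1.0 - l2_hit_rate)


if __name__ == "__main__":
    print(f"L2 capacity: {capacity_ratio(GM204_L2_KB, GK104_L2_KB):.0f}x")
    # Hypothetical hit rates, purely to show how a higher hit rate cuts DRAM traffic.
    for hit_rate in (0.5, 0.7):
        print(hit_rate, round(dram_requests(1_000_000, hit_rate)))
```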

Texture fill rate increases by 12% from GK104 to GM204 (thanks to clock speeds, not texture unit counts), while pixel fill rate more than doubles, going from 32.2 Gpixels/s on the GTX 680 to 72 Gpixels/s on the GTX 980.
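Fill rate is simply units × clock. Assuming base clocks of 1006 MHz (GTX 680) and 1126 MHz (GTX 980), which come from public spec sheets rather than this article, the ROP and texture-unit counts reproduce the figures quoted above:

```python
# Base clocks in MHz are an assumption from public spec sheets.
GTX_680_MHZ = 1006
GTX_980_MHZ = 1126


def fill_rate(units: int, clock_mhz: float) -> float:
    """Giga-pixels (ROPs) or giga-texels (texture units) per second: one per unit per clock."""
    return units * clock_mhz / 1000.0


pixel_680 = fill_rate(32, GTX_680_MHZ)   # ~32.2 Gpixels/s
pixel_980 = fill_rate(64, GTX_980_MHZ)   # ~72.1 Gpixels/s
texel_gain = fill_rate(128, GTX_980_MHZ) / fill_rate(128, GTX_680_MHZ) - 1  # ~0.119

if __name__ == "__main__":
    print(f"pixel fill: {pixel_680:.1f} -> {pixel_980:.1f} Gpixels/s")
    print(f"texture fill gain: {100 * texel_gain:.0f}%")
```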

A 256-bit memory bus might seem like a downgrade for a flagship card, as the GTX 780 Ti featured a 384-bit bus (though the GTX 680 had a 256-bit bus as well). But NVIDIA has made several changes to improve memory performance with that narrower bus. First, the memory now runs at 7.0 GHz (effective), and the GM204 cache is larger and more efficient, reducing the number of memory requests that have to go out to DRAM.
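Peak DRAM bandwidth is the bus width in bytes times the per-pin data rate. The 256-bit bus and 7.0 GHz effective memory clock for the GTX 980 are from the text; the 6.0 Gbps (GTX 680) and 7.0 Gbps (GTX 780 Ti) data rates are our assumption from public spec sheets. A minimal sketch:

```python
def bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth: bytes moved per transfer across the bus x effective Gbps per pin."""
    return bus_width_bits / 8 * data_rate_gbps


CARDS = {
    "GTX 680": (256, 6.0),     # data rate assumed from public specs
    "GTX 780 Ti": (384, 7.0),  # data rate assumed from public specs
    "GTX 980": (256, 7.0),     # 7.0 GHz effective, per the article
}

if __name__ == "__main__":
    for name, (bits, rate) in CARDS.items():
        print(f"{name}: {bandwidth_gb_s(bits, rate):.0f} GB/s")
```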

Another change is the implementation of a third-generation delta color compression algorithm that lowers the bandwidth required for any single operation. The savings apply both when data is written out to memory and when it is read back for the application, reaching as high as 8:1 on blocks of matching color values (4×2 pixel regions). Delta color compression compares neighboring color values and attempts to minimize the number of bits stored by encoding only the differences. If the data is very random and cannot be compressed at all, it is simply written to memory at 1:1.
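To make the delta idea concrete, here is a deliberately simplified toy codec in Python. It is not NVIDIA's algorithm, which this article does not describe in detail: it stores the first pixel of a 4×2 RGBA8 block raw and every other channel as a fixed-width signed delta from it, falling back to 1:1 when that would not save space. A perfectly flat block hits the 8:1 ceiling mentioned above.

```python
from typing import Sequence, Tuple

# Hypothetical toy model, not NVIDIA's actual format: RGBA8 pixels in a 4x2 block.
Pixel = Tuple[int, int, int, int]

BLOCK_PIXELS = 8      # 4 x 2 pixel region
CHANNELS = 4          # R, G, B, A
BITS_PER_CHANNEL = 8
RAW_BITS = BLOCK_PIXELS * CHANNELS * BITS_PER_CHANNEL  # 256 bits uncompressed


def signed_bits_needed(value: int) -> int:
    """Bits needed to hold `value` in two's complement; a zero delta needs no bits."""
    if value == 0:
        return 0
    magnitude = value if value > 0 else ~value  # ~value == -value - 1
    return magnitude.bit_length() + 1           # +1 for the sign bit


def compressed_bits(block: Sequence[Pixel]) -> int:
    """Store the first pixel raw and every other channel as a fixed-width delta from it."""
    if len(block) != BLOCK_PIXELS:
        raise ValueError("expected a 4x2 block of 8 pixels")
    anchor = block[0]
    width = max(
        signed_bits_needed(pixel[c] - anchor[c])
        for pixel in block[1:]
        for c in range(CHANNELS)
    )
    bits = CHANNELS * BITS_PER_CHANNEL + (BLOCK_PIXELS - 1) * CHANNELS * width
    # Incompressible (e.g. very random) data falls back to raw 1:1 storage.
    # A real format would also spend header bits on the mode and width; ignored here.
    return min(bits, RAW_BITS)


def compression_ratio(block: Sequence[Pixel]) -> float:
    return RAW_BITS / compressed_bits(block)


flat = [(40, 80, 120, 255)] * 8
gradient = [(40 + i, 80, 120, 255) for i in range(8)]
noisy = [(0, 0, 0, 0), (255, 0, 255, 0)] * 4

if __name__ == "__main__":
    for name, block in (("flat", flat), ("gradient", gradient), ("noisy", noisy)):
        print(f"{name}: {compression_ratio(block):.2f}:1")
```

The fixed per-block delta width is a design shortcut that keeps the example short; it is one reason the gradient block compresses far less than the flat one.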

NVIDIA claims that, combining the improved caching and compression techniques, Maxwell requires 25% less memory bandwidth per frame than Kepler. As a result, even though GM204's raw GB/s figures are only marginally higher than GK104's, the effective memory bandwidth of the new GTX 980/970 cards will appear much better to developers and in games.
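One way to read the 25% figure: if each frame moves 25% fewer bytes, the same raw bandwidth goes 1 / (1 - 0.25), or about 1.33 times, as far. Applied to the GTX 980's 224 GB/s (256-bit bus at 7.0 Gbps), a design without these savings would need roughly 299 GB/s to keep up. This sketch simplifies by treating NVIDIA's average claim as if it applied uniformly to every frame.

```python
def effective_bandwidth(raw_gb_s: float, traffic_reduction: float) -> float:
    """Raw bandwidth a design without the savings would need to move the same frames.

    If each frame moves `traffic_reduction` (e.g. 0.25) fewer bytes, the same raw
    bandwidth goes 1 / (1 - traffic_reduction) times as far.
    """
    if not 0.0 <= traffic_reduction < 1.0:
        raise ValueError("traffic_reduction must be in [0, 1)")
    return raw_gb_s / (1.0 - traffic_reduction)


GTX_980_RAW = 256 / 8 * 7.0  # 224 GB/s from the 256-bit bus and 7.0 GHz effective memory

if __name__ == "__main__":
    print(f"{effective_bandwidth(GTX_980_RAW, 0.25):.1f} GB/s")
```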

GM204 also includes changes that do not exist in GM107, intended to improve the performance of certain features NVIDIA is bringing to market. These help build the foundation for VXGI global illumination and more.

GTX 970 for me! Been waiting for this card. Great review

Mine, bro: video graphics

Very nice Ryan. It’s good to see that someone took the time to do SLI benches and framerate pacing.

Thanks, it's been a pain in the ass, and we were still writing stuff up as the article went live. 🙂

I know, the process you gotta go through to get those FCAT results is a pain, but I must say this puts your reviews and this website well above all others. True enthusiasts will understand, and I am now sold.

Sooo, when is the 980/1080/1800 or whatever Ti coming out? I need performance more than power efficiency.

I’m guessing that a TI version will be pulled out sometime after AMD’s new lineup, that is if their new lineup has a flagship that outperforms the 980.

Probably 6 months from now. AMD’s next-gen is supposed to drop in Q1 2015 so we’ll see how much they push things forward.

Nvidia didn’t exert much energy (no pun intended) to beat the 18-month-old performance of the 700 Series cards, so AMD is in a good spot.

Where AMD will have problems is not so much in pricing but in thermals: mini/micro-sized systems for HTPC, etc. may not be able to take the AMD SKUs even if the prices are lower. Getting as much GPU power into as small a form factor as possible is going to be a much more important market segment as more of these products are introduced.

Small form factor portable mini desktop systems, linked wirelessly to tablets and relying on the mini desktop for most of the processing, are going to appear: systems that can easily be carried around in a laptop bag along with a tablet, the tablet acting as the desktop host for direct(Via ad hoc WiFi) remote into the mini desktop PC. These types of systems (the mini PC part of the pair) will be more powerful than a laptop but just as portable, plugged in at coffee houses, etc., and wirelessly serving games and compute to one or more tablets. Fitting more powerful discrete GPUs into these systems without overburdening the limited cooling available in the mini/micro form factor will be big business, especially for gaming and game streaming on the go and for taking these devices along while traveling: a device that can be configured to act more like a laptop on battery power, but ramp up beyond what a laptop is capable of while plugged in.

Edit: the tablet acting as the desktop host for direct(Via ad hoc WiFi) remote into the mini desktop PC

to: Edit: the mini PC acting as the desktop/gaming/compute host for direct(Via ad hoc WiFi) remote from the tablet

Got it backwards!

I’m so glad I resisted the urge to not spend 3k on a Titan Z.

So you bought a Titan Z?

You would be an idiot to spend $3k on a Titan Z when you could get 2 Titan Blacks for $2k.

Pretty sure he was being sarcastic.

Can't help but notice the 770 has no numbers up. Are those still coming?

Didn't really have room for that in my graphs this time around, but I'll consider it for the retail card releases for sure. You can estimate how much slower the GTX 770/GTX 680 would be by comparing them to the GTX 780.

If Nvidia has managed to drop power requirements so much on the current 28nm process, I am eager to see what they will manage to do next year when the 20nm process starts. A die shrink is going to make AMD engineers rethink their GPU pricing and architecture.

It's an interesting thought – though all indications are right now that the 20nm process isn't setup to get higher performance.

It’s nice to see them get the performance gains from the 28nm node with some architectural tweaks; that means they will get even more from a process node shrink, whenever the fabs get the 20nm process set up for higher performance. This year and next should see some solid gains, and hopefully less rebranding, as a new round of the AMD versus Nvidia contest begins.

Here is what I think is wild:

The 980 seems to be incredibly “dumbed down” from where it could be if they wanted to max out. They’ve done this before though, right? It basically gives them headroom for when AMD makes their move. Also notice these prices: $560 for the 980 that beats the 780Ti. In reality the parts in the 980 are actually cheaper than those in the 780Ti (fewer cores, fewer bits, etc.), so their margins on the 980 right now are simply going to be amazing. Nvidia is in a good spot: 70% market share and holding the crown for best single GPU with huge margins. If/when they beef up the 980 it will be an absolute beast! Imagine if it was 384 or 512 bit with 2880+ cores.

That 970 is a killer card. Wow. Brilliant stuff!

Fantastic article and thanks for making a longer video – I’d like to see more video content from PCper! This certainly wasn’t too long, if anything I’d be very interested to see longer videos from PCper in general!

Make sure you subscribe to our YouTube channel and keep watching the Pcper.com front page!

Speaking of YouTube, Nvidia put up a great video explaining VXGI. At first I thought it was something that would make games like Minecraft perform better (which it very well may), but the truth is much cooler:

http://youtu.be/GrFtxKHeniI

Could you imagine if Nvidia cranked up the TDP? These GPUs would be insanely fast!

Nvidia seems to err on the side of being conservative when it comes to their GPUs, while AMD tends to go balls to the wall. Two different ways of doing things; we'll see in the long run who hurts most from it.

I’m surprised with the decent pricing on the 970. Are you sure this card is actually from Nvidia?

The 970 seems like a sucker punch aimed directly at AMD. Nvidia is happy to aggressively price when they feel it works in their favour.

Economy 101.

I’m impressed with the overclockability of Maxwell. Although, I wonder if watercooling will make much difference.

that is a very, VERY good point! this might mean that it will be much easier to get into x2 and x3 SLI!…… Ryan, how in the WORLD did you get your hands on PrecisionX 16!?! EVGA still won’t let people download 15….. please send me a download link :). Best article and video out about this great topic! thanks

Thanks for the compliment but sorry, can't share PrecisionX! 🙂

It became available for download a couple of days ago. I installed it on my PC last night.

It doesn’t really look like it has a margin above the 780 Ti from what I can tell. Just less power usage? A lot of the comparisons are just to the standard 780. Am I missing something here, or is this not really an upgrade for a 780 Ti (well, other than the plethora of DisplayPort connections)? The biggest advantage is the extra 1GB of memory, I guess, so it should do a bit better at 3840×2160.

Double check bf4 sli 4k frame time graphs. Is orange amd or nvidia?

Yup, fixed!

I thought you were going to make donuts?

Gotta hand it to Nvidia for making Maxwell so much more efficient on the same node. This gives some more hope that we’ll finally get some real nice performance improvements in the high end too once the big chips come in, and when they move to 20nm.

The 970 seems like a fantastic deal right now, and certainly AMD has to start scrambling with their lineup. Tonga suddenly doesn’t look that good and probably needs to go down in price very soon, especially if they’re planning on a 285X that’s unlikely to match a 970 in any area. Actually the same is true all the way up to 290X. Nice to see Nvidia put some actual pressure on the competition for once in terms of pricing. Kinda wish they had done the same with the 980, but I guess you take what you can get. I didn’t dare to hope Nvidia would actually want to compete on price.

Looking at reviews elsewhere, such as Techpowerup.com, the benchmarks for the GTX 980 are a bit disappointing in certain games. For example, in Wolfenstein: The New Order, Crysis, and Crysis 3 there were very small increases in frame rate.

Bioshock Infinite actually saw a decrease in frames at all resolutions. It was just a few frames, but all in all I am disappointed.

Wolfenstein is unoptimized crap; also, immature drivers.

Isn’t Wolfenstein capped at 60fps?

That also lacks multi-GPU support, not to mention all the performance issues.

“very small increases” compared to what?

Any chance we can see a color compression comparison?

Kepler, Maxwell, Tonga, Hawaii

How do you mean? Color compression on the memory bus is always lossless, so there should be no variance in image quality.

So many graphs!

My eye holes were completely unprepared. You should have warned us about that or something.

But overall, fantastic review.

Why is the framerating comparing 7970 and gtx 680? Am I missing something?

That’s the generic explanation of framerating and how to interpret the data. It’s been recycled throughout all the reviews since then, as it works the same for all GPUs.

That's correct – thanks for commenting for me!

Any plans to update the benchmarks with some CUDA or OpenCL testing?

CUDA-wise: Blender?

OpenCL: LuxMark?

Or any other non Gaming benchmarks.