Quick Performance Comparison

We used the latest GPUs from AMD and NVIDIA to compare Sniper Elite 3 after the release of Mantle.

Earlier this week, we posted a brief story that looked at the performance of Middle-earth: Shadow of Mordor on the latest GPUs from both NVIDIA and AMD. Last week also marked the release of the v1.11 patch for Sniper Elite 3 that introduced an integrated benchmark mode as well as support for AMD Mantle.

I decided that this was worth a quick look with the same line up of graphics cards that we used to test Shadow of Mordor. Let's see how the NVIDIA and AMD battle stacks up here.

For those unfamiliar with the Sniper Elite series, it focuses on the impact of an individual sniper on a particular conflict, and Sniper Elite 3 doesn't change up that formula much. If you have ever seen video of a bullet slowly going through a body, allowing you to see the bones/muscle of the particular enemy being killed…you've probably been watching the Sniper Elite games.

Gore and such aside, the game is fun and combines sniper action with stealth and puzzles. It's worth a shot if you are the kind of gamer that likes to use the sniper rifles in other FPS titles.

But let's jump straight to performance. You'll notice that in this story we are not using our Frame Rating capture performance metrics. That is a direct result of wanting to compare the Mantle and DX11 rendering paths – since we have no way to create an overlay for Mantle, we have resorted to using FRAPS and the integrated benchmark mode in Sniper Elite 3.

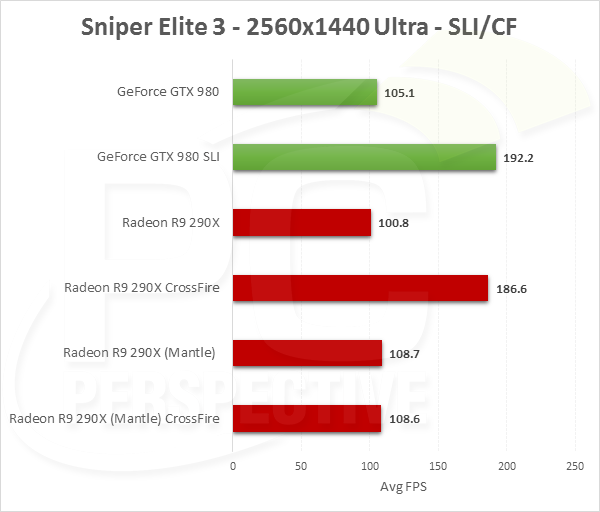

Our standard GPU test bed was used with a Core i7-3960X processor, an X79 motherboard, 16GB of DDR3 memory, and the latest drivers for both parties involved. That means we installed Catalyst 14.9 for AMD and 344.16 for NVIDIA. We'll be comparing the GeForce GTX 980 to the Radeon R9 290X, and the GTX 970 to the R9 290. We will also look at SLI/CrossFire scaling at the high end.

For our testing we used the Ultra in-game preset.

The GeForce GTX 980 is a small margin faster than the R9 290X if we look at the DX11 rendering path on 2560×1440 and matches performance at 3840×2160. But AMD's flagship card is able to gain about 7% performance with the Mantle implementation, helping to put it ahead of the GTX 980. The GTX 970 suffers more or less the same fate: matching or better performance under DX11 but slipping just behind the R9 290 when we enable Mantle in Sniper Elite 3 on the AMD cards.

Sniper Elite 3 scales very well on both AMD and NVIDIA hardware with two GPUs, as you can see in the graphs above. At 4K, for example, a single GTX 980 brings in just over 55 FPS while a pair of GTX 980s is 85% faster, crossing the 100 FPS mark. AMD's R9 290X actually scales a bit better in DirectX 11 mode, at 89% or so. However, Mantle doesn't scale at all with our two Radeon R9 290X cards, and the second GPU is more or less a bust when it comes to performance increases. Despite the benefit we saw in the single GPU results, the Mantle API does still struggle with CrossFire implementation.
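As a quick sanity check on the scaling figures above, the arithmetic can be sketched in a few lines of Python. The function names are ours, and 55 FPS is a rounded stand-in for the "just over 55 FPS" single-card result, so treat the output as approximate rather than as a measured number.

```python
def scaling_percent(single_fps: float, dual_fps: float) -> float:
    """Percent gain of a two-GPU setup over a single GPU."""
    return (dual_fps / single_fps - 1.0) * 100.0


def dual_fps_from_scaling(single_fps: float, scaling_pct: float) -> float:
    """Two-GPU frame rate implied by a single-GPU rate and a scaling percentage."""
    return single_fps * (1.0 + scaling_pct / 100.0)


# Assumption: 55.0 approximates the single GTX 980 result at 3840x2160.
gtx980_sli = dual_fps_from_scaling(55.0, 85.0)
print(f"GTX 980 SLI at 85% scaling: about {gtx980_sli:.0f} FPS")

# A second GPU that adds nothing, as with Mantle CrossFire here, is 0% scaling.
print(f"No scaling: {scaling_percent(55.0, 55.0):.0f}%")
```

Perfect scaling would be 100%, so 85% to 89% means the second card is contributing most of what it theoretically could.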

Both NVIDIA and AMD have great performance with maximum in-game image quality settings in Sniper Elite 3. The GTX 980 and GTX 970 have a small advantage at 2560×1440 but the results are nearly dead-even at 3840×2160 for those of you with 4K monitors sitting on your desks. With Mantle though, Rebellion (the game developer) was able to sneak out a bit more performance, ranging from 7.8% at 2560×1440 down to only 4% at 4K. Those changes give AMD's single GPU results the advantage but we aren't talking about enormous gains; at least not with the CPU+GPU combinations we were using here today.

The lack of multi-GPU support in Sniper Elite 3, and some of those lingering CrossFire issues in other games, still make me question the longer term viability of the API for enthusiasts. But with other games like Civilization: Beyond Earth and Dragon Age: Inquisition coming down the pipe with Mantle API support, I continue to be intrigued by AMD's direction.

So this is what a properly optimized game looks like?

mantle has mostly been minor gains on intel hardware, it gives best on amd cpus cause they are slower.

wrong!

You left out some very important information, but I see why, because you have a very very very bad habit of trying to fling mud on AMD, you are almost as bad as that knucklehead hitmanactua.

It isn’t that Mantle has very minor gains on intel hardware, it is that it has very minor gains on BOTH INTEL AND AMD HIGH-END HARDWARE.

Mantle has most benefit on lower end CPUs, both Intel and AMD, where the cpu is the bottleneck. The way you say it, you make it sound like all of Intel CPUs don’t get any benefit and all of AMD cpus are slow, which is just wrong, wrong wrong!

Maybe, but for this test system they are using an i7 3960x which is faster than 98% of all gamers CPUs so these charts are pretty relevant to everyone.

No:

It was also important

and

Given the recent price drop on the 290x, would you agree that the 980 is way overpriced?

just new gpu arch vs OLD.

GF100 (cut down) – GTX 480 – $499

GF110 (fully enabled) – GTX 580 – $499

GK104 (fully enabled) – GTX 680 – $499

GK110 (cut down) – GTX 780 – $649

GK110 (cut down) – GTX Titan – $999

GK110 (fully enabled) – GTX 780Ti – $699

GK110 (fully enabled) – GTX Titan Black – $999

GM204 (cut down) – GTX 970 – $329

GM204 (fully enabled) – GTX 980 – $549

When all is said and done, adjusting for inflation and taking into account previous release launch prices, the GTX 980 isn’t really overpriced at all. The 970 is just incredibly UNDERpriced. Let’s hope this continues.

No, the 970 is not UNDERpriced. What you said would imply that Nvidia would be losing money by allowing it to be sold at that price(this isn’t sony or M$ selling a console at a loss in hopes to make it up on game sales).

As a consumer, the view should be that at $329, it is closer to what it should be priced at(but still overpriced). 🙂

Hint: The basic cost to make them is not that much different.

>GK104 (fully enabled)

pfff hahahahaha

this is nvidia. they will still sell them anyway. also they just launched the 980. dropping the price so soon might not be in their best interest. AMD just made a round of price cuts. does it include the 285?

I’d just like to point out that we’re seeing a relatively modern and good-looking game running at 4K, ultra settings over 100fps… with single-card performance at nearly 60fps. We are living in the future.

(No subject)

Whether intended or not, I will interpret that to mean the 390X review is almost here.

You have my attention with that tease…

Ryan gets to play all the newest games.

prices are looking good. may have to pick up a 290 me thinks

I just ordered 2 XFX R9 290’s for $269 each.

nice price. Those XFX’s look pretty damm good.

When I look at power charts … I’ll wait to see how 390 turns out.

The big question is when?

Folks, the point of Mantle is to largely alleviate a *CPU* bottleneck. It can of course provide some level of GPU optimization, but the gains are really only going to be noticeable with lower-end CPU’s combined with midrange + up GPU’s.

Now, you may decide that such a combo may be rare enough due to this potential CPU/GPU imbalance (at least, based on now where games have to deal with DX11’s large overhead) that it’s not worth reviewing on, sure.

But just from a technical curiosity perspective to actually examine the difference Mantle can bring, reviewing it on games already set at very high resolutions with an already-beefy CPU kind of defeats the point – those settings will be bumping up against pixel shading and memory bandwidth limitations before the API can really show any potential optimization benefits.

At the very least if you can’t move to another testing rig with a lower-specced CPU, it would be nice to see some benchmarks at lower resolutions to place the bottleneck more on the CPU and thus (potentially) the API. I fully understand that might not be realistic for your average setup – especially with these GPU’s – and that is one of the reasons Mantle isn’t much of a selling point for DIY PC gamers at the moment.

Just from a tech geek perspective though, it would be nice to see the actual stress points the API is designed to help alleviate tested. Testing the differences between DX11 and Mantle at these settings just doesn’t make much sense for any type of investigation on the supposed efficiency of AMD’s API; you’re basically trying to see what difference lessening the CPU load makes at settings which are GPU bound.
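The CPU-bound versus GPU-bound argument in this comment can be illustrated with a deliberately simplified toy model, in which each frame takes as long as the slower of the CPU and the GPU. All of the millisecond figures below are hypothetical and chosen only to show the shape of the effect; they are not measurements from this review, and real pipelines overlap work in ways this sketch ignores.

```python
def fps(cpu_ms: float, gpu_ms: float) -> float:
    """Toy model: frame time is set by whichever processor is slower."""
    return 1000.0 / max(cpu_ms, gpu_ms)


def api_gain_percent(cpu_ms_old: float, cpu_ms_new: float, gpu_ms: float) -> float:
    """Percent FPS gain when only the CPU cost per frame is reduced."""
    before = fps(cpu_ms_old, gpu_ms)
    after = fps(cpu_ms_new, gpu_ms)
    return (after / before - 1.0) * 100.0


# Hypothetical GPU-bound case: fast CPU, high resolution.
print(f"GPU bound: {api_gain_percent(8.0, 5.0, 18.0):.1f}% gain")

# Hypothetical CPU-bound case: slow CPU, low resolution.
print(f"CPU bound: {api_gain_percent(20.0, 12.0, 10.0):.1f}% gain")
```

In this model, cutting CPU time changes nothing while the GPU is the slower side, which is the commenter's reason for asking for lower resolutions or a slower CPU. The single-digit gains measured in the article suggest real workloads are not quite this clean-cut.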

No Mantle XFIRE support? Sniper 3 launched over 3 months ago! Gamers have all likely beat the game multiple times by now.

LOL mantle this mantle that. even if you had a CPU that would bottleneck the game you still won't get the same FPS as these tests have shown. Mantle doesn't give big gains on current or older powerhouse CPUs.

That’s because games are still built around the limitations of d3d 9-11. It would be commercial suicide for a developer to increase the number of draw calls to a level which d3d would not be able to handle.

If the developer increased the lod and draw distance by 2x and the number of objects in the world by 2x, then I would bet you would see a much greater difference even with very high end CPUs. The problem with this is that it would not be very playable on 99% of systems under d3d.

Also, hypothetically, if you had a GPU that is significantly faster than what we have at the moment paired with a current high end CPU you should see a much larger improvement over d3d (CPU progression is much slower than GPU progression performances-wise presently).

So developers use the same assets and don’t increase draw calls, or even provide it as an option, because they don’t want to alienate the majority of their customers who do not have access to lower level APIs(this should change once dx12 is the most used API).
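The draw-call point in this comment can be sketched as a simple budget calculation: if each draw call costs the CPU a fixed amount of time, the per-call overhead caps how many calls fit in one frame. The overhead values below are purely illustrative assumptions, not measured figures for Direct3D, Mantle, or any other API.

```python
def max_draw_calls(frame_budget_ms: float, per_call_us: float) -> int:
    """Largest number of draw calls that fits in the CPU frame budget."""
    return int(frame_budget_ms * 1000.0 / per_call_us)


FRAME_BUDGET_MS = 16.0  # roughly one frame at 60 FPS

# Hypothetical per-call CPU costs in microseconds (illustrative only).
high_overhead_us = 2.0
low_overhead_us = 0.5

print("High-overhead API:", max_draw_calls(FRAME_BUDGET_MS, high_overhead_us))
print("Low-overhead API: ", max_draw_calls(FRAME_BUDGET_MS, low_overhead_us))
```

Under these assumptions, a scene tuned to fit the higher-overhead API leaves most of the lower-overhead API's headroom unused, which is why doubling object counts would be expected to widen the gap.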

i seen this game,it’s awsome

hello:

I have a question: you mentioned there is an integrated benchmark mode in the game. Where is it?

please give me a E-mail,501210147@qq.com,thank you!