Game Testing – Sniper Elite 4, Strange Brigade, Hitman

Sniper Elite 4 (DX12)

The RX 590 posts double-digit percentage gains over every other GPU tested in Sniper Elite 4: 11% over the RX 580 and 15% over the GTX 1060 6GB.

| AMD RX 590 Comparisons, Average FPS, Sniper Elite 4 (DX12) | MSI RX 580 Gaming X 8GB | EVGA GTX 1060 SSC 3GB | MSI GTX 1060 Gaming X 6GB |
|---|---|---|---|
| XFX RX 590 FatBoy 8GB | +11% | +21% | +15% |

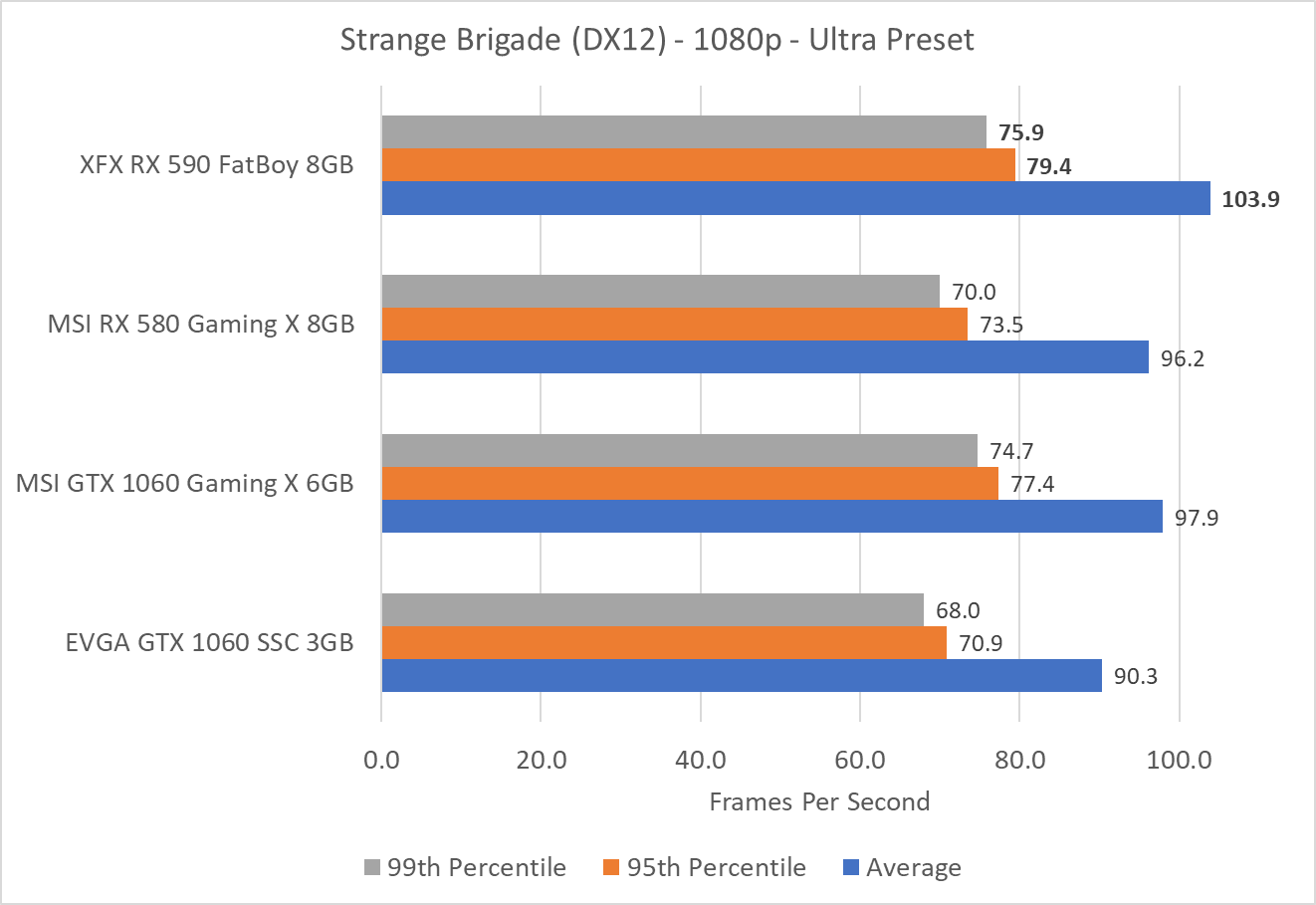

Strange Brigade (DX12)

In Strange Brigade, the new RX 590 holds a 6% advantage over the GTX 1060 6GB and an 8% advantage over the RX 580.

| AMD RX 590 Comparisons, Average FPS, Strange Brigade (DX12) | MSI RX 580 Gaming X 8GB | EVGA GTX 1060 SSC 3GB | MSI GTX 1060 Gaming X 6GB |
|---|---|---|---|
| XFX RX 590 FatBoy 8GB | +8% | +15% | +6% |

Hitman (DX12)

Wrapping up our gaming testing, Hitman gives a 13% advantage in average FPS to the RX 590 over the GTX 1060 6GB, and 9% over the RX 580.

| AMD RX 590 Comparisons, Average FPS, Hitman (DX12) | MSI RX 580 Gaming X 8GB | EVGA GTX 1060 SSC 3GB | MSI GTX 1060 Gaming X 6GB |
|---|---|---|---|
| XFX RX 590 FatBoy 8GB | +9% | +27% | +13% |
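
The three tables above give the RX 590's lead over each comparison card. From those leads one can back out roughly how the comparison cards stand against each other. The Python sketch below is only illustrative: `implied_gap_pct` is a name made up for this example, and because the published percentages are already rounded, the results are approximate.

```python
# RX 590 lead (in percent) over each card, copied from the three tables.
RX_590_LEAD_PCT = {
    "Sniper Elite 4": {"RX 580": 11, "GTX 1060 3GB": 21, "GTX 1060 6GB": 15},
    "Strange Brigade": {"RX 580": 8, "GTX 1060 3GB": 15, "GTX 1060 6GB": 6},
    "Hitman": {"RX 580": 9, "GTX 1060 3GB": 27, "GTX 1060 6GB": 13},
}


def implied_gap_pct(lead_over_a: float, lead_over_b: float) -> float:
    """Percent by which card A leads card B, given the RX 590's lead over each.

    If RX590 = (1 + a) * A and RX590 = (1 + b) * B, then A / B = (1 + b) / (1 + a).
    A negative result means card A trails card B.
    """
    return ((100 + lead_over_b) / (100 + lead_over_a) - 1) * 100


if __name__ == "__main__":
    for game, leads in RX_590_LEAD_PCT.items():
        gap = implied_gap_pct(leads["RX 580"], leads["GTX 1060 6GB"])
        print(f"{game}: RX 580 vs GTX 1060 6GB = {gap:+.1f}%")
```

Read this way, the RX 580 comes out a few percent ahead of the GTX 1060 6GB in Sniper Elite 4 and Hitman and slightly behind it in Strange Brigade.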

Pass…

Why? Radeon RX 590 is faster than Nvidia RTX 2080 Ti in Battlefield V at maximum quality settings (which also means you will not be distracted by mirror-like reflections).

Thanks for the review, but your MSRP listing is NOT valid. The 580’s official MSRP was $229, not $219 (and it was very rare even at that; usually $239 or more, because 90% of them were “custom” cards from AIBs), and it is $279 for the 590 (though on-the-shelf pricing is likely to easily breach $300, because AIBs always figure they have “the best” and so charge that much more just because they can).

It shows when the shelf prices of the 570 and 580 are virtually identical 9 times out of 10, and only now is the 580 “almost” where it should be relative to the 570 (about +$30).

Either way, I guess the 12nm did not help much in terms of power consumption, but it made a difference in regards to clock speeds.

It makes me really wonder why AMD did not do an entire line refresh (at least 570/580 and Vega 56/64) to get their power consumption even better at the SAME speeds.

No memory overclocking? What gives?

Yeah, memory overclocking is really needed. On my reference RX 480, which barely maintains 1266 MHz, the memory is a bottleneck in most games. On the 480 I can get a stable 15% overclock on the VRAM, which translates to a roughly 8% increase in FPS in most games. Since the 590 has way higher GPU clocks, it is definitely memory-bandwidth starved.

I'll dig into this in the upcoming days!

Not the biggest of deals, but your chart shows the GTX 1060 3GB as having 6GB of RAM. Now I’m no mathemagician, but that seems… off.

Polaris/RX 590 has an excess of shaders (2304) and a shortage of ROPs (32, with a pixel fill rate of 49.44 GPixel/s) relative to Nvidia’s GTX 1060 6GB (1280 shader cores), which has more ROPs (48, with a pixel fill rate of 82.03 GPixel/s). Polaris 30 is mostly a die-shrunk, transistor-tweaked version on that GF 12nm process. So Polaris 10, 20, and 30 are the same GPU design across the board (480, 580, 590), with the 590’s minor tweaks coming mostly from the fab process node and transistor tweaking on GF’s 12nm(1) node. [Note: pixel fill rates, in GPixel/s, sourced from TechPowerUp’s GPU database]
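
As a rough check on the figures quoted above: pixel fill rate is conventionally computed as ROPs times clock, and peak FP32 throughput as shader cores times two operations per clock (one fused multiply-add) times clock. The minimal Python sketch below assumes reference boost clocks of 1545 MHz for the RX 590 and 1709 MHz for the GTX 1060 6GB. Those clocks are not stated in this thread and are an assumption, as are the function names.

```python
def pixel_fill_rate_gpixels(rops: int, boost_clock_mhz: float) -> float:
    """Peak pixel fill rate in GPixel/s: one pixel per ROP per clock."""
    return rops * boost_clock_mhz / 1000.0


def fp32_tflops(shaders: int, boost_clock_mhz: float) -> float:
    """Peak FP32 throughput in TFLOPS: two operations (one FMA) per shader per clock."""
    return shaders * 2 * boost_clock_mhz / 1_000_000.0


# Shader and ROP counts are the ones quoted in the comment above.
# Boost clocks are ASSUMED reference values, not taken from this page.
RX_590 = {"shaders": 2304, "rops": 32, "boost_mhz": 1545}
GTX_1060_6GB = {"shaders": 1280, "rops": 48, "boost_mhz": 1709}

if __name__ == "__main__":
    for name, gpu in (("RX 590", RX_590), ("GTX 1060 6GB", GTX_1060_6GB)):
        fill = pixel_fill_rate_gpixels(gpu["rops"], gpu["boost_mhz"])
        compute = fp32_tflops(gpu["shaders"], gpu["boost_mhz"])
        print(f"{name}: {fill:.2f} GPixel/s, {compute:.2f} TFLOPS FP32")
```

With those assumed clocks the arithmetic reproduces the 49.44 and 82.03 GPixel/s figures, and it lands close to the 7.1 TFLOPS peak compute number cited for the 590 later in this thread.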

It looks like Nvidia obtained that power savings on its GTX 1060 variants at the cost of shader core count, against AMD’s Polaris variants that have many more shaders and the higher power usage that comes with them. That worked out well for Nvidia on DX11 titles, but DX12 is now mature, and more gaming titles are DX12 optimized than when Polaris was first released and are making more use of DX12 (Vulkan also). So the DX12-optimized games may be liking AMD’s more shader-heavy Polaris designs now more than ever.

I think that maybe AMD could also be offering an RX 570/12nm binned die variant soon, with fewer shader cores, to get the power usage figures down. Certainly there will be Polaris 30 die bins on that 12nm process that do not have enough working shader cores to be used for any RX 590 SKUs.

So AMD should be able to improve its entire Polaris product stack via Polaris 30 die binning, and maybe up the performance further down at the lower-end SKUs also. The RX 470/570 variants were always the price/performance leaders relative to the higher-binned Polaris parts, so maybe an RX 570 (12nm) die bin would be better for HTPC/eSports sorts of titles: it could fit in small form factor HTPC boxes better, with lower power/thermal requirements, but still have extra shader cores relative to Nvidia’s Pascal variants.

DX12 looks like it’s being nicer to AMD’s shader-heavy Polaris designs, what with DX12 able to make better use of async compute and game developers making fuller use of DX12 (Vulkan also), where the new APIs are better able to fully utilize shaders compared to DX11.

Now for more Polaris undervolting benchmarks to show up, along with some DX12 Explicit Multi-GPU Adapter titles and dual RX 590s benchmarked (AOS, other titles SVP). Vulkan gaming titles also.

That GF 12nm process did save on power, so it gave AMD and its AIB partners the option of using the same clocks as the 580 and saving power, or running higher clocks at the same power usage. The AIBs are probably pushing things a little higher, and AMD increased the TDP over the 580’s TDP to allow AIBs more room to try and get higher stable overclocks out of the box. So the AIBs are the ones pushing the power usage up there if they can get better performance, and this card does come with an 8-pin and a 6-pin, so there is some room for that.

(1)

“VLSI 2018: GlobalFoundries 12nm Leading-Performance, 12LP”

https://fuse.wikichip.org/news/1497/vlsi-2018-globalfoundries-12nm-leading-performance-12lp/

Folks on the interwebs are already on about the RX 590’s power usage and blaming that on GCN. Really, just look at the damn shader core count on Polaris 30: the RX 590 still has 2304 shader cores to the GTX 1060 6GB’s mere 1280 shader cores.

So anyone with half a brain will realise that the Polaris 480, 580, and 590 SKUs have almost twice the shader core count of the GTX 1060 6GB variant. The laws of physics dictate that more shader cores, which take more transistors to implement, will always use more power.

Maybe AMD is still trying to appeal to the few remaining coin miners out there with that peak compute of 7.1 TFLOPS on the 590. But AMD was on the cheap, so maybe there can be an RX 570 12nm variant when the reject die bins begin to fill up with sufficient numbers of Polaris 30 dies that do not have enough working shader cores to be made into RX 590s.

Or better yet, you power usage/fan noise crybabies over at the Tech Report’s blog, just underclock the 590 to the same clock range as the 580 and then you’ll have your power/thermal needs met. Undervolt also and see what you can do with that and underclocking, so that any fan noise will not bother your little ears while you are wearing full over-the-ear headphones and playing Battlefield whatever.

Blame the extra power usage on the RX 590’s die tapeout and not the 590’s GPU micro-arch: twice the damn light bulbs/lamps plugged in and running are always going to use more power than half the amount.

Or take the average power usage and divide it by the total number of shader cores to get a fairer Watts/shader core metric; that will give you a more accurate way to judge the Polaris/GCN micro-arch’s efficiency.
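
The Watts-per-shader-core idea can be sketched in a few lines of Python. The shader counts are the ones quoted in this thread. The power figures (225 W for the RX 590 and 120 W for the GTX 1060 6GB) are vendor-rated board power numbers assumed here purely for illustration, not measurements from this review, and `watts_per_shader` is a hypothetical helper name.

```python
def watts_per_shader(board_power_w: float, shaders: int) -> float:
    """Board power divided by shader core count, in watts per shader core."""
    return board_power_w / shaders


# Shader counts come from the comments above.
# Power values are ASSUMED vendor ratings, not measured draw.
CARDS = {
    "RX 590": {"shaders": 2304, "power_w": 225.0},
    "GTX 1060 6GB": {"shaders": 1280, "power_w": 120.0},
}

if __name__ == "__main__":
    for name, card in CARDS.items():
        ratio = watts_per_shader(card["power_w"], card["shaders"])
        print(f"{name}: {ratio * 1000:.1f} mW per shader core")
```

Swapping in measured power draw from a review would change the picture, and the metric says nothing about how much work each shader core gets done per clock, so it is at best one input into an efficiency comparison.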

Really, some folks are just that stupid, while others are just being disingenuous when they bring up power usage on a GPU with around twice the shader cores and compute of the competing SKU.

You’ve gotta be trolling. How you can defend the abysmal results of the 590’s power draw is beyond me.

Seriously buddy, we’re not buying what AMD has you selling. There is just no legitimate excuse for the 590 to draw almost twice as much power as the 1060 and only offer maybe 10% more performance. Keep trying though, you are amusing.

If you are looking for low power draw, get the Nvidia card; it has almost half the shader cores and more ROPs to fling those filthy frames out there faster to please gamer Bubba’s fragile ego.

Some folks do not even care one little bit about gaming; they are in it for the FPS rates and the ePeen ego boost.

Some Folks will actually want the shader cores and the higher FP performance on the RX 590.

If you only want to game, by all means get the Nvidia cards. If you want to mine, then get the RX 590 and underclock/undervolt it to get the best MegaHashes/Watt metric. The RX 590 has almost twice the FP compute of the GTX 1060. And if you run the RX 590 at a reasonable undervolt and slightly lower clock rates, you will get the Flops/Watt sweet spot for your compute workload.

I couldn’t care less about gaming only on a GPU, as there are other uses for the RX 590 than only gaming. Some folks do not care about the power draw and they will overclock and game that way. The selling price on the RX 590s will still start to go down sooner rather than later once the game bundling deals expire. When the prices start to fall, that will be the time to price out some dual-GPU gaming builds; some DX12/Vulkan games do offer Explicit Multi-GPU Adapter ability to game across more than one GPU, and that graphics API/game-based multi-GPU IP does not make use of CF/SLI.

Hell, wait for the RX 570 (12nm) variants to arrive. These will come as a result of the imperfect results of the fab process, with some Polaris 30 dies having too many defective shader cores to be made into an RX 590. So any RX 570 (12nm) SKUs will come with fewer shader cores.

I would be damn happy if AMD took their GPU technology over to the professional market only and made more money that way. But the consumer/gaming market is a great way to earn at least a little revenue off of those defective dies, and AMD really needs to focus on the professional GPU graphics and professional compute/AI markets where the mark-ups are better.

That RX 590 will be purchased by some no matter the power usage. But really, it cost very little for AMD to reissue this Polaris 30 design on GF’s 12nm node and get the power/thermal advantages of GF’s 12nm transistor IP. That is where all the power savings came from, offering the AIBs the choice of saving power by running the RX 590 at the same clock speed as the 480 or clocking the RX 590 higher and using more power for those that want better gaming performance.

You can get yourself a GTX 1060, but that SKU can’t run any of the old legacy SLI-enabled Nvidia games, while the RX 590 will still run any legacy CF games. Any newer DX12/Vulkan games that begin to make use of more GPU compute will not run as well on the GTX 1060’s minimal amount of shader cores, while the RX 590 will probably still have cores to spare.

You can very well enjoy your GTX 1060, and AMD will make more markup selling professional Vega 20s to the fast-growing compute/AI market. But even Nvidia has gotten burned by the coin mining market! That, and not including more ROPs on its Turing die tapeouts than the Pascal die tapeouts offered, for a gaming market that’s still majority raster oriented and makes no large use of RTX/Turing’s ray tracing IP. The professional graphics folks will like RTX/Turing’s ray tracing more, but they do not necessarily need real-time ray tracing. Ditto for Nvidia’s tensor core based AI upscaling, an IP that AMD’s semi-custom console APU clients are likely to want for the next generation of console SKUs.

Nvidia really screwed the pooch by not increasing the ROP counts on its first generation Turing Die Tapeouts to get a little higher FPS rates on the current gaming titles that are all still very much raster oriented.

Hi Ken, power supply graph is duplicated instead of showing power split between sources. 🙂

While the main truth is that I wish Jeremey would stop writing for PCPER altogether, the thing I want to bring up is not quite as aggressive. I want to say that Jeremey should not be writing 2 BS articles about the RX 590 on the day of the RX 590 launch to bury the PCPER review and push it toward the bottom. This is basic common sense: you want your visitors staying on your website as long as possible, and that means making it as easy as possible for them to find the information they are here for. There are a lot of impatient people out there who could come to your site looking for your review, only to see 2 BS articles from Jeremey and move on to the next site instead. Even worse, his articles are telling people to go to OTHER websites, so someone might read his article, click his link to go read someone else’s review, and then never read yours. Absolute stupidity, killing your own company.

Sadly, my master plan continues to fail after 17 years of trying. Hell, people can't even remember how to spell my name!

Well, it seems you’ve been asked to leave. I’ll miss you Jaremey Hailstorm

Can you actually point to something that is “BS”? Or are you just venting your frustration?

I’d really like to understand what specifically is “BS”; so far the only thing I see is your comment.

Also, the XFX 590 review was done by Ken, not Jeremy. The other 590 review was done by Jeremy.

Slight update to my comment, Jeremy didn’t do any 590 review, just pointed to HardOCP’s review.

So still trying to find the “BS” you mentioned.. but nada

product is so boring we can’t even get a good flame war going in the comments

That is the meanest thing I have heard in ages … kudos to you.

This to me was a bad move by AMD. It may have been easier to make a Vega card a tad faster than the 1060 6GB. It could have been named the Vega 28. Why squeeze juice out of a card that pretty much has no more headroom for higher clocks? Then again, I don’t know what AMD has been thinking about lately. Then again, every move AMD makes, Intel or Nvidia has to adjust. This is good for those fans.

Yes but they still want to give GlobalFoundries some wafer action to keep the current wafer supply agreement up.

So if you take the time to read the WikiChip Fuse article (on GF’s 12nm node) referenced in another post on this forum, that GF 12nm node has the exact same BEOL (Back End Of Line) metal pitch as the GF (licensed from Samsung) 14nm process! So it’s very easy for AMD to keep the original FEOL (Front End Of Line) layout but still take advantage of GF’s new 12nm improved transistor resistance/leakage metrics, which are precisely why AMD is getting the higher clocks and/or better power usage metrics on Polaris 30.

So under the GF 12nm node the previous 14nm customers can switch over almost effortlessly to GF’s 12nm node. AMD can keep its current wafer agreement and still not have to pay GF for any 7nm TSMC production because GF gave up on the 7nm node race.

AMD sources the Epyc/Rome 14nm I/O die production from GF, while the Epyc/Rome compute (CPU) dies at 7nm will come from TSMC. I/O and anything analog does not scale as well at smaller nodes, so at 14nm GF has some SerDes IP that’s mature on that process; ditto for other SerDes IP suppliers and that mature 14nm Samsung (licensed by GF) node. So making use of already tested, vetted/certified 14nm SerDes IP is a great cost savings for AMD and plenty of business for GF at 14nm and 12nm.

AMD gets plenty of advantages making use of 14nm and 12nm production capacity from GF, while TSMC gets only the necessary Zen 2 core parts (dies/chiplets) done under its 7nm process. And looking at how small the Zen 2 Epyc/Rome CPU dies/chiplets are without any SoC I/O logic taking up space, AMD will be getting plenty of 80%+ die/wafer yields from that TSMC 7nm node as well. I’ll bet that AMD can fit almost 4 times the Zen 2 Epyc/Rome dies/chiplets per wafer at TSMC’s 7nm node size.

SerDes is what all the processor makers use for CPU/GPU/other processor connection fabrics. One of the great things about AMD’s Multi-Chip-Module construction is that different dies can make use of the different process nodes that are best suited for the specific task. Maybe one day one of those dies on the MCM will be a GPU die to go along with the CPU dies and I/O die, even FPGA/DSP dies also.

All AMD has been thinking about lately is CPUs and GPUs for the data center market, where AMD can get a whole lot more markup than most consumers/gamers are willing to pay. And AMD has no intention of investing any unnecessary money in flagship gaming, where the little markups do not justify the R&D and other costs, and the flagship GPUs have lower sales volumes relative to the mainstream GPU market’s unit sales numbers. How many times now has Lisa Su stated that AMD is only going to be producing for the mainstream GPU market on the consumer side?

Until AMD gets a scalable GPU die system in place like it has for its Zen CPU lines, AMD will limit itself to monolithic mainstream GPU dies/tapeouts for desktop gaming. And Navi is not developed to the point where the Navi dies can be made very small and then scaled on an interposer/MCM like with Zen on that MCM.

What gamers can hope for is that AMD will at least be able to create some dual-die Navi cards, with midrange Navi dies wired up across a single PCIe card’s PCB via AMD’s Infinity Fabric IP instead of using PCIe for the on-card interface. Dual GPU dies on a single PCIe card is where both AMD and Nvidia can make use of their respective Infinity Fabric and NVLink IP to better interface at least 2 GPU dies on one card, and have those dual-GPU variants appear to software like one larger logical GPU.

Both AMD and Nvidia have that IP worked out; it’s just that the smaller modular GPU die based IP is still some years off, owing to the complexities of the inter-GPU mesh connection topology IP that’s still not ready for production. But that dual GPU die on one PCIe card option is still a much simpler task to manage, one that can be quickest to market and that could get AMD some flagship gaming options sooner rather than later based on using dual midsize Navi dies.

But really, until AMD’s professional GPU market sales take off and are used to pay for the majority of AMD’s initial GPU R&D investments, I would not expect AMD’s RTG to have the funds to compete in the flagship GPU market. That’s how Nvidia funds the majority of its gaming GPUs: using technology that had its R&D cost already paid for by professional market sales. Let’s see how much Vega 20 pro market sales can help RTG get the funds to compete better with Nvidia in the gaming GPU market. And folks, it ain’t happening without the money, no way and no how, and AMD’s CPU division is not going to be sharing too much there with RTG, because AMD’s CPU division already has to compete with Intel and their Wads-O-Dosh.

Edit: improced

To: improved

I would have liked to see how the Vega 56 stacked up against the RX590. Anyone have analysis on how the 590 compares to the 56 ??

The Vega 56 is better and you can wait for Navi also but Navi is for mainstream gaming only as AMD has stated. The one good thing to come from AMD not competing as much with Nvidia on Gaming GPUs, that will also benefit AMD at a later time, is that New GPU generation ASPs will be much higher. And That’s thanks to Nvidia’s much higher Turing Pricing! So AMD has the opportunity to price its Navi offerings a little higher and still undercut Nvidia’s pricing.

Lisa Su says: Good Work JHH on setting your Turing MSRPs so high so we at AMD can charge a little more for Navi when it arrives!

Quite a few comparisons around the web, Vega 56 easily outperforms the 590

https://techreport.com/review/34260/amd-radeon-rx-590-graphics-card-reviewed/2

On the plus side, I still do not have a compelling price/performance reason to upgrade from the RX480. Two years on, and we are only just getting back down to the same ratio! This looks like it will be a good long cycle before a refresh is needed.

Hi, guys! I wonder where the 230 watts of consumption came from. The video card consumes 206 watts maximum!

http://www.hardwareluxx.ru/index.php/artikel/hardware/grafikkarten/45927-radeon-rx-590-test.html?start=6

Undervolting will bring consumption below 200 watts…